| Installation and Administration | Getting Started | Command Line | Configuration | Eclipse Plugin | Reference Manual |

| Show on single page Show on multiple pages |

|

|

|

Copyright © 2017 Squoring Technologies

Licence

No part of this publication may be reproduced, transmitted, stored in a retrieval system, nor translated into any human or computer language, in any form or by any means, electronic, mechanical, magnetic, optical, chemical, manual or otherwise, without the prior written permission of the copyright owner, Squoring Technologies.

Squoring Technologies reserves the right to revise this publication and to make changes from time to time without obligation to notify authorised users of such changes. Consult Squoring Technologies to determine whether any such changes have been made.

The terms and conditions governing the licensing of Squoring Technologies software consist solely of those set forth in the written contracts between Squoring Technologies and its customers.

All third-party products are trademarks or registered trademarks of their respective companies.

Warranty

Squoring Technologies makes no warranty of any kind with regard to this material, including, but not limited to, the implied warranties of merchantability and fitness for a particular purpose. Squoring Technologies shall not be liable for errors contained herein nor for incidental or consequential damages in connection with the furnishing, performance or use of this material.

Abstract

This edition of the Getting Started Guide applies to Squore 17.1.2 and to all subsequent releases and modifications until otherwise indicated in new editions.

Table of Contents

The following conventions are used in this manual.

| Typeface or Symbol | Meaning |

| Bold | Book titles, important items, or items that can be selected including buttons and menu choices. For example: Click the Next button to continue |

| Italic | A name of a user defined textual element. For example:

Username

: admin

|

Courier New

| Files and directories; file extensions, computer output. For example:

Edit the config.xml file |

Courier Bold

| Commands, screen messages requiring user action. For example:

Username

: admin

|

| > | Menu choices. For example: Select

File > Open

. This means select the File menu, then select the Open

command from it. |

| <...> | Generic terms. For example:

<SQUORE_HOME> refers to the Squore

installation directory. |

Notes

Screenshots displayed in this manual may differ slightly from the ones in the actual product.

The following acronyms and abbreviations are used in this manual.

| CI | Continuous Integration |

| CLI | Command Line Interface |

| DP | Data Provider, a Squore module capable of handling input from various other systems and import information into Squore |

| RC | Repository Connector, a Squore module capable of extracting source code from source code management systems. |

Table of Contents

This document was released by Squoring Technologies.

It is part of the user documentation of the Squore software product edited and distributed by Squoring Technologies.

This document is the Getting Started Guide for Squore.

It is indented as a follow up to the Squore Installation and Administration Guide and will help you understand how to use the Squore user interface to create and update projects. It is divided into several chapters, as detailed below:

Chapter 2, The Tools at Your Disposal provides details on where to find the sample Squore projects.

Chapter 3, Accessing Squore will guide you through your first access to Squore as a user.

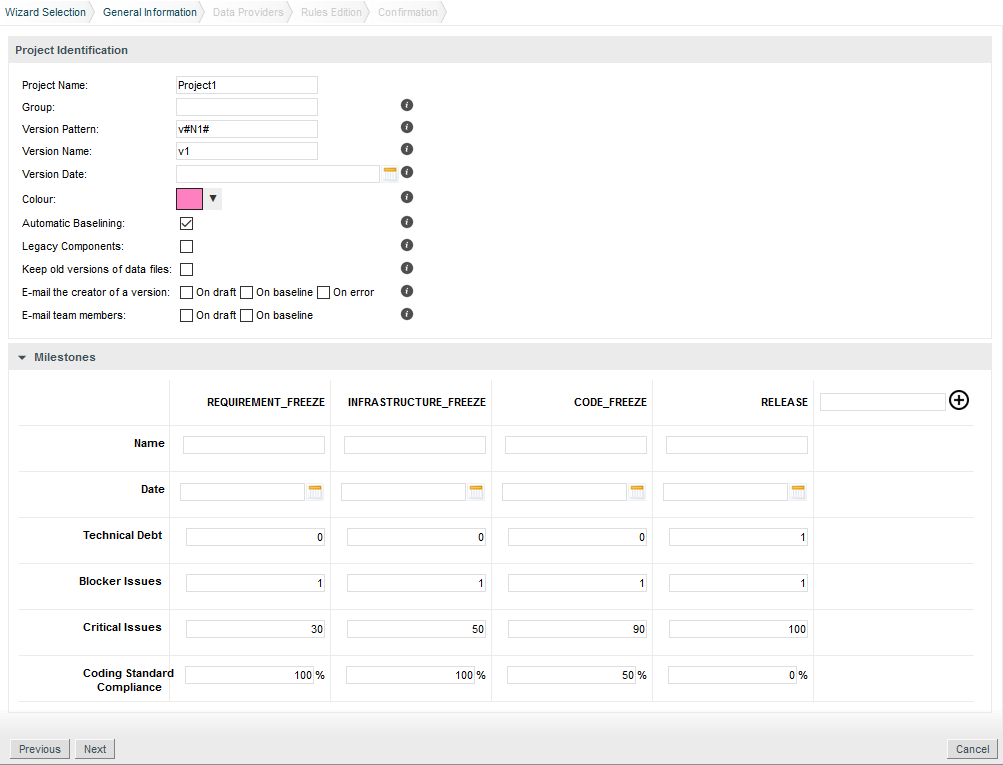

Chapter 4, Creating Projects and Versions covers ways of creating new projects and versions.

Chapter 5, Understanding Analysis Results describes the user interface and functionality you will use in Squore on a daily basis.

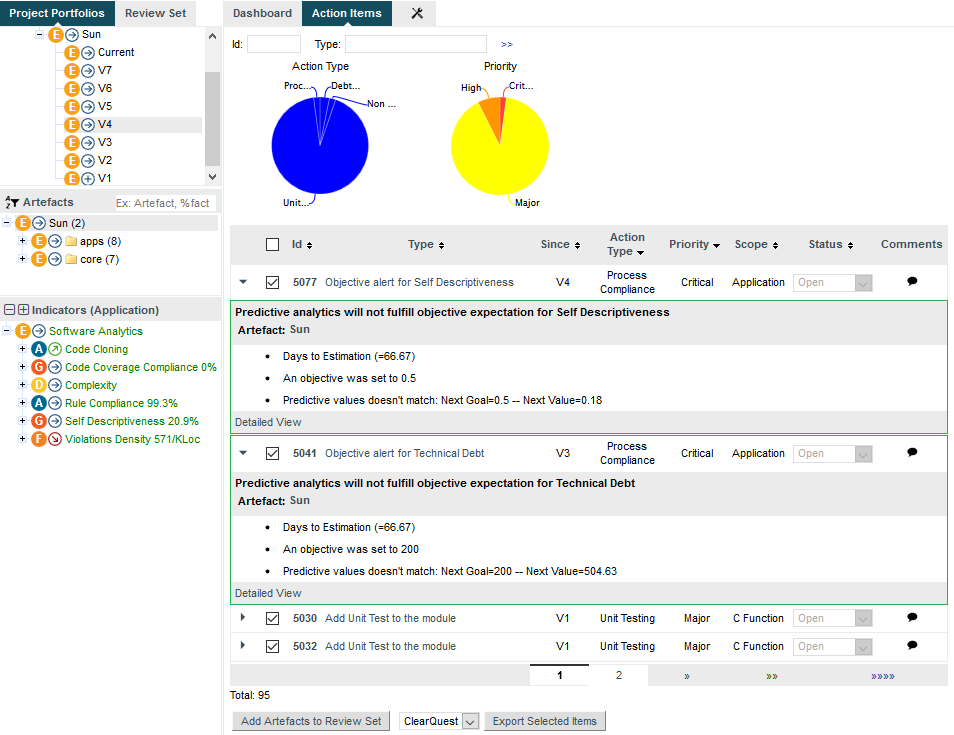

Chapter 6, Managing Your To-Do List With Squore helps you integrate action items suggested by Squore into your workflow.

Chapter 7, Track Your Favourite Indicators shows how you can track your favourite items and consult Squore results on mobile devices.

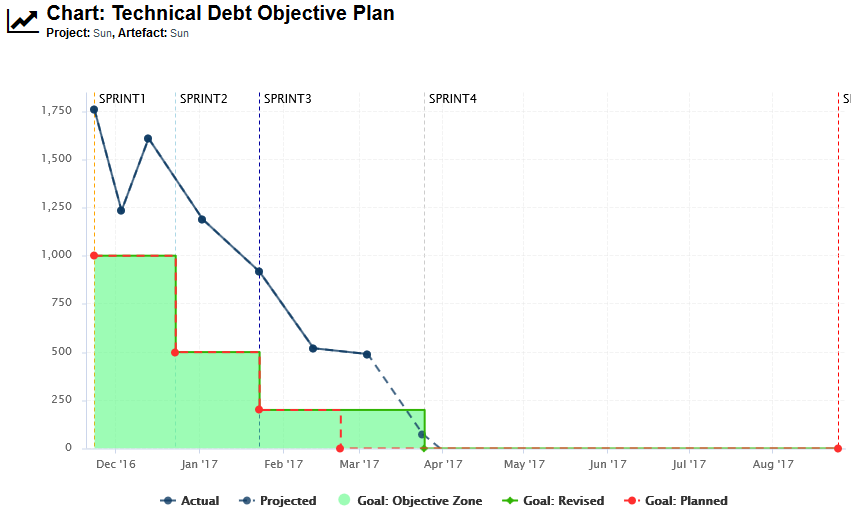

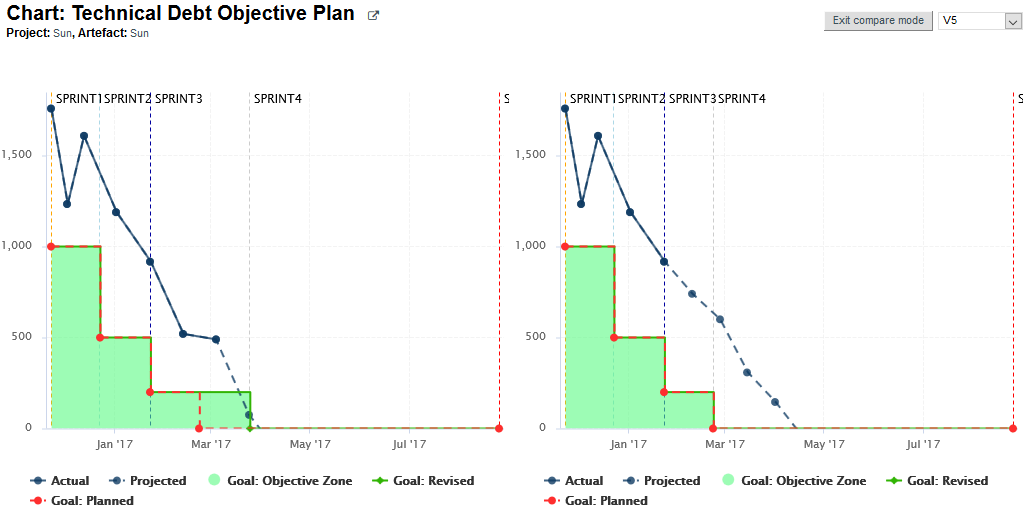

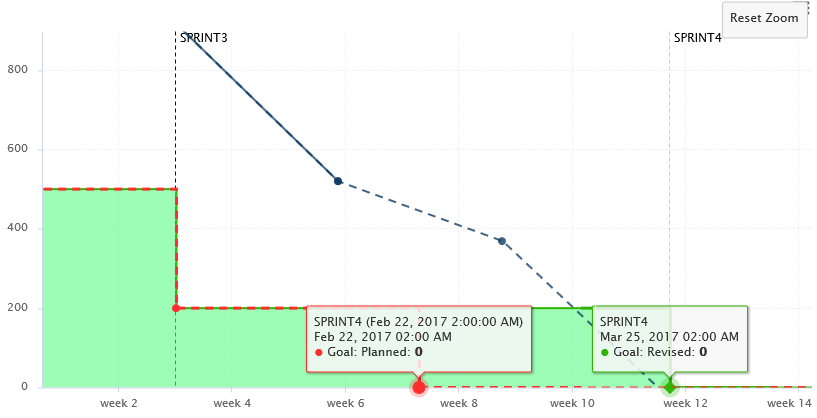

Chapter 8, Focus on Your Milestones guides you through the introduction and management of milestones and objectives in Squore.

Chapter 9, Communicating With Squore covers all reporting features of Squore.

Chapter 10, Keep it Tidy: Project Maintenance in Squore helps you maintain a Squore installation.

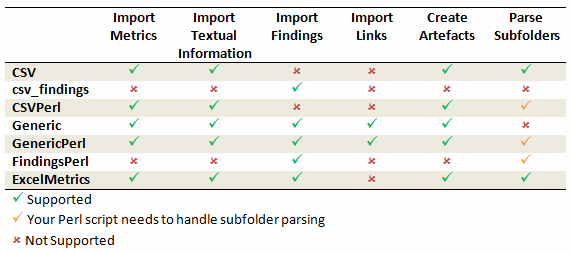

Chapter 11, Repository Connectors and Chapter 12, Data Providers detail the various Repository Connectors and Data Providers you can use when launching analyses.

If you are already familiar with Squore, you can navigate this manual by looking for what has changed since the previous version. New functionality is tagged with (new in 17.1) throughout this manual. A summary of the new features described in this manual is available in the entry * What's New in Squore 17.1? of this manual's Index.

For information on how to use and configure Squore, the full suite of manuals includes:

Squore Installation Checklist

Squore Installation and Administration Guide

Squore Getting Started Guide

Squore Command Line Interface

Squore Configuration Guide

Squore Eclipse Plugin Guide

Squore Reference Manual

If the information provided in this manual is erroneous or inaccurate, or if you encounter problems during your installation, contact Squoring Technologies Product Support: http://support.squoring.com/

You will need a valid Squore customer account to submit a support request. You can create an account on the support website if you do not have one already.

For any communication:

support@squoring.com

Squoring Technologies Product Support

76, allées Jean Jaurès / 31000 Toulouse - FRANCE

Approval of this version of the document and any further updates are the responsibility of Squoring Technologies.

The version of this manual included in your Squore installation may have been updated. If you would like to check for updated user guides, consult the Squoring Technologies documentation site to consult or download the latest Squore manuals at http://support.squoring.com/documentation/17.1.2. Manuals are constantly updated and published as soon as they are available.

Table of Contents

Squore ships with a collection of sample projects that we will refer to throughout this guide. Each project consists of one or several versions of the source code of an application. The code can be found in Squore Server and Squore CLI in the folder <SQUORE_HOME>/samples. If you do not have access to the sample projects, contact your Squore administrator to obtain a copy of the code.

Squore ships with a database that contains two sample users that you can use to familiarise yourself with all the functionality available:

You can use these two default users, but we recommend that you change their passwords after your first connection. The privileges and permissions assigned to these default users can be modified as needed. You can familiarise yourself with Squore permissions and privileges by referring to the section called “Understanding Profiles and Roles”.

You may choose to read this manual from beginning to end, or jump straight to a specific topic. Logging in as the demo user, gives you access to a Tools menu that allows to reproduce the examples shown in this manual. Click Tools > Build Demo Projects and select the Software Analytics - C samples to get started.

The menu is only accessible to the user demo or any user who belongs to a group called demo. Contact your Squore administrator if you do not have access to the Tools to create the sample projects.

If at any moment you have doubts about how a feature works, Squore offers help in HTML and PDF formats. A Wiki and support site are also available.

The Squore online help can be accessed from anywhere in Squore by clicking on the ? > Help menu entry.

The online help is contextual and provides information in a popup window about the page that you are currently viewing in Squore.

The Squore user guides are available in PDF and HTML format by clicking the ? > User Guides menu entry in Squore. You can download a copy for offline use.

The Squoring Technologies Support Wiki provides release notes, known issues and hints and tips for current and past Squore versions. Visit http://openwiki.squoring.com for more information.

Every owner or Project Manager of a project can retrieve the analysis log files for their projects without the need to consult an administrator. This is done by accessing the Manage page for a particular project and viewing the Versions tab (Projects page > Manage icon > Versions tab) as shown below:

Clicking the Log icon opens a page showing the project's client and server logs, as well as configuration and output files will open in a new browser tab.

Clicking the Download link in the Debug info column downloads a zip file of the logs and project data that can be further analysed to understand problems during problem creation.

In order to investigate application failures (rather than project analysis errors), Squore administrators have the possibility to extract the latest log file created by the application. You can access the log if you have administrator privileges by clicking Administration > Server Log in the toolbar after logging in. The log file opens in a new browser window or tab.

Administrators can also get debug information and manage any project created on the server by clicking Administration > Projects, which provides a detailed view of all projects created on Squore Server, on a summary page shown below.

A debug info package contains the following items:

A DataProviders folder containing the output files generated by

each Data Provider run during the analysis.

A [DataProviderName].log file for each Data Provider included in the analysis.

A [projectId]_conf.xml file summarising the project parameters used for the analysis.

A [projectId]_output.xml file containing the output information requested with the --filter parameter during the analysis.

A build.log file containing the information relative to actions carried out on the server during the analysis.

A build_client.log file containing the information relative to actions carried out on the client during the analysis.

A excluded.log file containing the list of all files not included in the analysis and the reason for their exclusion. Note that this file is only generated if some files were excluded.

A table.md5 file containing state information about the analysed source code, if any.

A storage folder containing information about the analysed source code, if any.

If you do not want to download the entire debug package, note that the main log files can also be downloaded individually from the Projects page by clicking on the project status label.

Table of Contents

This chapter walks you through your first access to Squore and covers the web interface and some ways to customise it to your liking.

Before you start working with Squore, it is essential to understand how access management works. The various permissions and privileges that can be assigned to Squore users are grouped in profiles and roles respectively. A set of default roles and profiles is available when you first start the server. You can edit them, or create more as needed.

Use this simple trick to remember the different between a profile and a role:

A Squore user with the Administrator profile can manage users, their roles and profiles. A Squore user with the Project Manager role for a project can create a new version of this project or give access to another user to this project's analysis results.

You can use profiles to grant or deny access to the following Squore features:

Manage Server: Configure the server, access server logs, manage all projects.

Manage Users, Groups and Roles: Complete access to user management on the server.

View Models: Allows users to use the Viewer and the Validator.

Use Capitalisation Base: Provides access to the Capitalisation Base feature to learn from past data in order to improve your model.

Create Projects: Allows users to run analyses.

Modify Models: Allows users to use the Dashboard Editor and the Analysis Model Editor.

Use External Tools: View and use external tools configured by your Squore Administrator. To learn more about this feature, consult the Configuration Guide.

Manage Configuration: Allows users to reload the server configuration from disk.

View Online Help: Allows users to consult the online help from the web interface.

View User Manuals: Allows users to consult the product documentation from the web interface.

Three profiles are available by default, with permissions set as shown below:

Note that a profile can be assigned to a user or a group of users. It is therefore possible for a user be a member of more than one profile. In this case, the user's profile is the combination of all permissions from all the profiles they are a member of.

A role is the set of privileges that a user enjoys in the context of a project. You can use roles to allow users to undertake these actions within the scope of a project:

TODO to Relaxed

for example. Without this privilege, the status is displayed

as a read-only field.Six roles are available by default, with privileges assigned as shown below:

Note that a user can have multiple roles in a project. This allows a user to view the dashboard in the Explorer as a user from another role would. A View As option in the option menu of the Explorer allows to you to switch between the various dashboards available to you. When you have multiple roles in a project, you combine privileges from all the roles that you are a member of.

The owner role is assigned automatically to the user who creates the first version of a project. A project has only one owner, and you can control how much a project owner can see and do by modifying the permissions of the OWNER role. An administrator can transfer ownership of a project to a new user if required.

Your Squore installation runs on

http://localhost:8180/SQuORE_Server

by default. By accessing this page in your browser, you will be

redirected to the Squore login page,

as shown below:

Type in a username and a password and click Login to log in.

To begin using Squore, log in as the demo user with

demo as username and password on the

login page. Click the Login button

and wait for the Welcome page to open.

From the Welcome page, you can automatically return to the last projects or favourite artefacts that you had opened in the Explorer before logging out. You can also get links to the help and other features available for your account.

As the demo user, you are an advanced user of Squore and have access to the following functionality from the toolbar:

Explorer, where you can review your analysis results.

Projects, where you can launch new analyses and manage your projects.

Favourites, where you can view and manage your favourite charts across projects.

Capitalisation, where aggregated statistical data can be found.

Models, under which you can examine all characteristics of your model and edit your dashboards.

Tools, which contains shortcuts to scripts that recreate demo projects. Note

that only the demo user and members of the demo group have access to this menu by default.

<username>, where you can set your preferences and log out from Squore.

?, where online help, user manuals and application information can be found.

Note: If you log in as an administrator of Squore using admin

as the username and password, your will gain access to the Administration

menu where you can configure access management and administer the server.

You can log out of Squore by clicking your user name in the menu bar and selecting the Logout option. Note that if you close your browser without logging out, your session will automatically time out after two hours.

The Squore look and feel can be adapted to your liking, with three provided themes, accessible from the

<username>

menu option. Select one of the available

colour schemes to change the color of the interface. Your changes are saved using a browser cookie.

You can use Squore in various languages. English and French are provided by default, and

your Squore administrator can add more as needed. If you want to change the language of the Squore user interface,

click the

<username>

menu option and click one

of the flags available. The changes are applied immediately and your preferences are saved even after you log out.

Table of Contents

In this chapter, you will learn about the various ways to create a project in Squore: using the UI, using a command line tool or triggering analyses in a continuous integration environment.

Creating a project in Squore is as easy as following a wizard that will prompt you for information about the source material to analyse, and the external Data Providers to add to the analysis results.

The example below assumes that the source code for the sample project used is available on a network share. The path to the source files to analyse is relative to the server.

In order to create a project for the sample application Neptune2, follow these steps:

Access

http://localhost:8180/SQuORE_Server

in your browser.

The log-in page appears.

Log in as the demo user with the login/password combination

demo/demo.

Click the Login button. You are presented with the Squore home page.

Click Projects to switch to the projects view and click Create Project to create the Neptune2 project.

The General Information screen appears.

The General Information screen

If your Squore installation has been customised to provide more than one project wizard, you will see a Wizard Selection screen where you can choose which project wizard to follow. Project wizards allow you to use different analysis models and tools to analyse your projects. For this demo, click the Software Analytics wizard to start creating the project.

The Wizard Selection screen

On this screen, you can enter the information relative to your project in the Project Identification section

The Version Date field allows specifying a custom date for the analysis, so that different analyses can be placed correctly on a timeline later for certain charts in the dashboard. If you leave it empty, then the actual time at which you are running the analysis is used.

The Software Analytics model offers extra parameters below the Project Identification section, but you can ignore them for now.

Click the Next button. The Data Providers screen is shown:

The Data Providers options screen

This screen allows configuring the repository locations and tools that will be used in your analysis.

Set the source code files option to Folder. In the Datapath text box,

type the path to the Neptune2 source code: \\server\share\samples\c\Neptune\W25.

The only Data Provider used in our analysis is Squan Sources, the source code analyser, so you can leave all the other tools unchecked.

If you want to learn more about the available Repository Connectors and Data Providers, consult Chapter 11, Repository Connectors and Chapter 12, Data Providers .

In the Squan Sources parameters, ensure that C is one of the programming languages selected, as shown below:

The Squan Sources Data Provider parameters

Click the Next button to read the Rules Edition screen. This screen allows you to tweak the ruleset available in the analysis model.

The Rules Edition screen

The table displays the entire model's ruleset, which you can filter and sort by data provider or category. Each rule can be turned on or off, and you can click the Edit button to adjust the categories for each rule. Note that any modifications from the original configuration are displayed with an asterisk.

Click the Next button when you are satisfied with your modifications. Note that your modifications are applied for any subsequent analysis of this project and do not affect other projects using the same model.

This screen may not be enabled in your wizard, as your administrator may have disabled it in your configuration. Your administrator can also decide to make modifications to the ruleset that apply to any project created with this model using the Analysis Model Editor. Consult the section called “Analysis Model Editor” and the section called “Using Ruleset Templates” to learn more.

Before launching the analysis, a summary of your selections is displayed. Review the information and click Run to confirm the project creation.

The summary page lists all lists all the options you specified for the project creation and also allows outputting them in various formats so that you can repeat the project creation in command line. For more information about reusing the project parameters in a different context, consult the online help or the section called “Can I Create a Project Via the Command Line?”.

When the project analysis completes, Squore shows you the list of projects. Neptune2 appears in the list, together with information about the current version and its computed rating:

To consult the results of the analysis, click on the project name to view the Squore Dashboard. More information on how to read the Dashboard is available in the section called “Where Are My Analysis Results?”.

Adding a version to an already-existing project is a simple procedure that is carried out from the Projects page.

Follow these steps to create version 2 of your project:

After logging into Squore, click on Projects.

Click the Build

icon

(![]() )

for the Neptune2 project in order to access the source code file options.

)

for the Neptune2 project in order to access the source code file options.

The first screen of the wizard enables you to specify the version name and to modify some of the project attributes if necessary.

Parameters For the New Version of Neptune2

Click the Next button to reach the project language and source settings screen. On this screen, you can modify the path to the source code and point to the newer version. Note that by default, Squore displays the path used when analysing the last version. Leave the path as it was for version 1. We are going to create a version that analyses the same code in this example. If you scroll down to the code analysis option, you will notice that some of them are now disabled. This is because the project configuration was set in version 1 and is not allowed to be modified in subsequent analyses. This ensures that your project is scored using the same criteria every time you analyse new code.

Unavailable options when creating version 2 of a project

Note: You can add new sources to the project at this stage if needed. Read more about projects using sources spread over multiple locations in the section called “Can I Create Projects with Sources From Multiple Locations?”.

Click Finish and Run to launch the analysis of Neptune2 V2. When the analysis finishes, Neptune2 V2 will be listed in the list of projects on the Projects page.

This section covers an essential workflow feature of Squore: baselining. While it is possible to keep every version of a project created in Squore, you may want to permanently keep analysis results only for particular milestones and work with an always updating draft version.

You can decide whether a version is a draft or a baseline when you create it, or after the analysis is finished.

The most important thing to remember about a draft version is that it is a snapshot of your data at a given time. You can use it to compare the evolution of your project against the last baseline created. There is therefore only one draft version available per project (the latest version), which Squore creates automatically if your previous version was a baseline. A baseline version, on the other hand, is permanently saved and will not be overwritten the next time an analysis is launched.

When you create a draft version, it is always called Current and can be modified in several ways:

Forms can be updated

Attribute values can be modified so that a new value is taken into account in the next analysis

Artefacts can be manually added, modified or deleted

Folders and files can be relaxed or excluded from the project

Action Items can have their status changed

Rules and individual violations can be relaxed

Being able to view draft versions of a project is a user privilege that can be granted to users of a particular role, and so is the ability to baseline a project. For more information about roles, refer to the section called “Understanding Profiles and Roles”. This means that as a project manager, you can give access to every version to users within your team, but can restrict the project visibility to the rest of the company to show them only milestone versions (the ones you baselined). You can also decide which members of your team are allowed to change the status of a version from draft to baseline.

Use the Automatic Baselining option on the General Information screen of the project wizard to create a draft or baseline as follows:

You can use the Baseline option on the Projects page to create a baseline version of the current draft as follows:

) next to the project you want to baseline.

) next to the project you want to baseline.After confirming the baseline creation, you are redirected to the Projects page and the last draft version becomes the new latest baseline. All changes made manually to artefacts and findings are kept, and will be incorporated the next time an analysis runs. Note that baselining is only available for users whose role allows the Baseline Projects privilege. For more information about roles, consult the section called “Understanding Profiles and Roles”

Baselining manually is useful if you have reviewed the current draft and have not made any changes to the analysis results. If you have modified form entries or relaxed artefacts and findings in a way that should impact the rating, consider launching a new build or using the Apply Changes button instead of baselining. See the section called “Handling Manual Modifications” for more information.

When you have made changes to form values or you have relaxed artefacts and findings in the current draft, there are two ways to get these changes reflected in the dashboard:

running a new analysis

clicking Apply Changes

Running a new analysis will allow you to change the source code repository settings and input files for data providers, or keep them. You can choose if this new analysis should produce a baseline or a draft version. In all cases, the artefacts you relaxed or excluded, the action items you modified and the findings you relaxed are taken into account to produce the rating of the new version.

Clicking Apply Changes ( ) allows you to merge the manual modifications to artefacts, forms, action items and findings into a new

draft version without reanalysing source code and re-running data providers. Manual modifications are simply merged with the already-

existing results to update the rating, which is a lot faster than running a full analysis. Note that Apply Changes is not available when you have excluded artefacts.

) allows you to merge the manual modifications to artefacts, forms, action items and findings into a new

draft version without reanalysing source code and re-running data providers. Manual modifications are simply merged with the already-

existing results to update the rating, which is a lot faster than running a full analysis. Note that Apply Changes is not available when you have excluded artefacts.

You can also use Apply Changes after modifying your analysis model to migrate a project to the new version of a model without running a full analysis.

There are three types of changes you can make to Squore projects:

Changes to attribute values

Changes to source code locations

Changes to some of the Data Provider options

Project attributes are always editable when creating a new version of a project, except for the name of the project.

The location of the source code can always be modified. When editing a project, you can also add more source locations as needed, following the steps described in the section called “Can I Create Projects with Sources From Multiple Locations?”.

Whether you can edit the settings used in the Data Providers for the project depends on their ability to support edits. This ability is defined by a Squore administrator via the configuration of the Squore wizards. For more information, refer to the Squore Configuration Guide.

Instead of creating a project from the Squore web interface, you can create a project directly from the command line using Squore CLI. Squore CLI is a client for Squore that enables you to create and analyse projects locally and send the results to Squore Server. Alternatively, you can use Squore CLI to instruct Squore Server to carry out the analysis.

If you have installed Squore CLI on your computer, you can call it using Java, passing the parameters you would have passed in the web interface to create projects. The following is an example of the command line you can use to create a project using Squore CLI on Windows:

@echo off java -Dsquore.home.dir="%SQUORE_HOME%" ^ -jar %SQUORE_HOME%\lib\squore-engine.jar ^ --url=http://localhost:8180/SQuORE_Server ^ --commands=DELEGATE_CREATION ^ --name=Mars2 ^ --repository "type=FROMPATH,path=\\server\share\samples\c\Mars2\V3.2.6" ^ --color=rgb(103,25,237) ^ --version=1.0 ^ --login=demo ^ --password=demo ^ --filter=APPLICATION,MEASURE,LEVEL ^ --wizardId="ANALYTICS" ^ --dp "type=SQuORE" echo done pause

The example above shows how to specify commands, parameters and project options to Squore CLI. This would create a project named Mars2 in version 1.0, analysing source code located in \\server\share\samples\c\Mars2\V3.2.6 with the Data Provider SQuORE (the internal name for Squan Sources).

You can find more information about using Squore CLI in the Command Line Interface manual, which explains how to install the client and create projects.

If you use a Continuous Integration tool like Jenkins or CruiseControl, you can add Squore to your build process and analyse projects every time your code is compiled. This requires the installation of Squore CLI on the continuous integration server, and is therefore described in greater details in the Command Line Interface Manual.

The source code analysed by Squore does not have to be located on the same machine as Squore Server or Squore CLI. When you create a project, you get the option to choose from a range of Repository Connectors to pull source code from:

Direct file system access (local drive, network share, mass storage media...)

Zip upload

A ClearCase view

A CVS checkout

Git cloning

An Integrity repository

A Perforce depot

A Subversion revision

A Synergy database

A TFS server

Each option requires different parameters, which can be specified from the project wizard, or via the command line. For more information, refer to Chapter 11, Repository Connectors.

Squore provides support for analysing projects whose sources are

spread over several locations or version control

systems. If your source code resides in

/products/common and

/projects/myproject,

you can specify these two locations in the

Squore project wizard by clicking the

Add Repository

button. Similarly, if some of your code is managed by a

SVN repository and the rest is handled by a Git server,

you can configure both locations as part of the same project,

as shown below:

A project using sources from two SVN repositories and a network drive

Now that you have created a project, you are ready to start reviewing the analysis results in the main section of Squore, the Explorer, which consists of a set of trees for browsing through project artefacts and various dashboards to display the information associated with these artefacts.

The Squore Explorer

Common icons are used throughout the explorer to indicate the rating of a component and its evolution compared to the previous version. The image below shows the meaning of the different icons used:

The Squore Explorer icons

The left-hand part of the Explorer is a three-panel section containing expandable trees.

The Tree Pane

The top panel contains the Project Portfolios and the Review Set.

The Project Portfolios is a list of all the projects you have access to, grouped by analysis model. Each project is listed with its latest rating and evolution and can be expanded to show all versions of the project that were analysed with Squore.

The Review Set is a flat list of artefacts you collect from various projects in order to review them. This list is saved when you log out and log into Squore again.

The expanded Earth project, rated E, and its 7 versions in the Project Portfolios

The tree in the middle panel is the Artefact Tree . When Squore analyses a project, it breaks it down into artefacts of various configurable types, for example APPLICATION, PROGRAM, FOLDER, FILE, CLASS or FUNCTION, according to the analysis model used. The artefacts in the tree are displayed for the version selected in the Project Portfolios. clicking a different version of a project refreshes the artefact tree with the ratings for the version just selected. Above the artefact tree are tools for filtering, pinning, sorting and searching artefacts. Each artefact is displayed with its current rating and can be expanded to reveal child artefacts if available. The number in brackets indicates the amount of child artefacts for the current artefact. You will learn about these tools later in the section called “Has the Quality of My Project Decreased Since the Previous Analysis?” and the section called “How Do I Find and Keep Track of Artefacts?”.

The Artefact Tree for version 6 of the Earth project

The bottom panel is the Indicator Tree , in which ratings for the indicators defined in the analysis model at the current level are displayed. Each indicator can be expanded to display the rating of each of its sub-indicators. The Indicator Tree displays statistics for the artefact currently selected in the Artefact Tree and refreshes when the selection is changed. The type of artefact selected in indicated in brackets. Two shortcut buttons can be found above the top node to quickly expand and collapse the entire tree.

The partly expanded Indicator Tree for version 6 of the Earth project at Application level

Clicking one of the tree nodes reveals more information about the indicator, including the formula used by Squore to compute its value and rating.

The popup displayed when clicking the Complexity Distribution indicator at application level

The right-hand side of the Squore Explorer contains a series of tabs, the first of which is the Dashboard. The Dashboard is dynamic and always displays information about the artefact currently selected in the artefact tree. There is not one Dashboard, but a Dashboard per node in the tree. Additionally, the Dashboard can be customised by a Squore administrator so that users see a different Dashboard according to their role in a project, thus highlighting different information for project managers, quality engineers and developers for example. Ask your Squore administrator about Dashboard customisation, or refer to the Squore Configuration Guide for more information.

The left-hand area of the Dashboard contains the score card , which consists of a graphical representation of the key performance indicator for the current artefact, and some tables highlighting key metrics about the project.

The score card area

Each table lines display a series of details about the key performance indicator:

The name of the metric (e.g. Rule Compliance). When clicked, a popup shows the way the metric is computed. Optionally, some metrics may allow an extra link to be displayed. This link shows the list of findings taken into account when calculating this metric (See Non Conformity Count).

The raw value of the metric and its evolution according to the previous version (e.g. -99.77% =). Clicking a value ion this column displays a chart of the history of the last 10 values recorded for this metric.

If the metric displayed is an indicator, the rating of the indicator is displayed, along with its evolution (e.g. Level A, stable). If the metric is a measure, then an information icon is shown. In both cases, you can click the information in this column to display more details about how the metric is computed.

The right-hand area of the Dashboard contains a series of charts representing key information about the current artefact. Clicking a graph opens a larger version of the image so you can analyse the data. Note that the available charts will differ depending on the type of artefact selected in the tree. Files and functions for example include a Source Code chart (for users who have the privilege to browse source code), which does not appear in the Dashboard for folders and applications.

The charts area

The Dashboard is only the first of a series of tabs in the Explorer. In the following chapter, you will find out more about the role of the Action Items, Highlights, Findings, Forms, Reports, Indicators, Measures and Comments tabs. Note however that like the Dashboard, the information displayed in each tab is always relative to the node currently selected in the Artefact Tree.

In contexts where your projects reuse code from other projects that you also analyse in Squore, you can create a meta-project that will show the analysis results from the various software bricks in a single project.

This feature is not enabled by default in the standard configuration, so consult your Squore administrator to make the necessary changes to your model, following the instructions described in the Configuration Guide.

Selecting a wizard for building meta-projects

When you select a wizard that allows to create a meta-project, you do not have to specify any locations for source files or any data providers to run. Instead, you are presented with a project picker that allows you to tell Squore which sub-projects compose your meta-project. In the example below, we will create a project that uses Earth (V2), Mars (v3.2.6), and Saturn (Prel) as its parts. You can choose any baseline version of any project you have access to in Squore as a component of your meta-project.

Building a new meta-project with code from Earth, Mars and Saturn

When the analysis finishes, the meta-project is listed along with the other projects in the Project Portfolios. You can expand the Artefact Tree for your meta-project to browse the artefacts of the three sub-projects that are part of your meta-project, and consult all their Action Items, Findings and Highlights.

The dashboard for the meta-project Solar, composed of Earth, Mars and Saturn

Table of Contents

This chapter describes the main features available in the Explorer. By the end of the chapter, you should be able to make the most of the information and decisions presented by Squore and start applying them to improve your development practices.

After completing the analysis of a new version of your project, you will probably want to investigate how it has evolved, more specifically for which artefacts the quality has decreased. Let's look at the history of the Earth Project (which should be available if your Squore administrator has created the sample projects shipped with the Squore installation) to find out how to spot the worst-scored components in your project.

Log into Squore as the demo user using demo/demo

and observe the evolution of the Earth project in the

Project Portfolios:

The versions of the Earth Project

The trend arrows before the version names in the the Project Portfolios indicate that the overall rating has not changed (More information about the quality indicator icons is available in the section called “Where Are My Analysis Results?”.). If you take a closer look at the Technical Debt Trend chart however, you can notice that the technical debt is growing for this project.

The Technical Debt Trend chart for the current version of Earth

Since the trend accelerated in V6, we will focus on this version. Let's click V6 in the tree and start our evaluation by looking at the score card, which rates Earth at E.

Let's also look at the Developer dashboard, which offers more insight into coding violations. Select View As > Developer in the Explorer menu:

The View As menu allows to switch roles in the project

Some values under Artefact Counting, Line Counting and Issue Distribution explain the lower score: the application contains more files and functions, more lines of code, less comments and more rules violations.

The score card for the version V6 of Earth

By now you probably want to find out which components in your project are responsible

for increasing the technical debt the application in this version. If you want the Artefact Tree to

reflect this information, you can change the sort order to show the worst scores first

by clicking on the sort icon

( )

and selecting

LEVEL > Worst first to display artefacts from worst-scored to

best-scored.

)

and selecting

LEVEL > Worst first to display artefacts from worst-scored to

best-scored.

In this section, you will learn to look for artefacts the hard way by using filters and search. For a more automated way to find artefact that fit a specific category, take a look at the section called “Finding Artefacts Using Highlights”.

Click the Filter icon

(![]() )

above the Artefact Tree to show the filter options and select only the levels F and lower:

)

above the Artefact Tree to show the filter options and select only the levels F and lower:

The filter popup with the boxes checked to filter artefacts rated F and lower

Click Apply to apply the changes, and the Artefact Tree should refresh to show only artefacts in the levels selected, as shown below:

The filtered Artefact Tree showing artefacts rated F and lower

The notice Filter active is always displayed above the Artefact Tree when you are using a filter. The tree now only shows artefacts rated F and lower, along with their ancestors (i.e. their parents and ancestors), even though the ancestors may not be rated F and lower.

While a filter is active, you can still search for other artefacts by typing a search term in the search box. Try typing ma in the search box above the Artefact Tree, and watch the search results list get populated as you type:

The search results for the term entered in the search box

If you select the first search result in the list above,

you will open the dashboard for machine.c.

Let's go back to our filtered tree. The filter singled out two artefacts with the

required score range: player.c and

robot.c. The artefact player.c deteriorated in

version 6. Click on the artefact player.c to view its

dashboard. Note how the score card indicates that the artefact has more blocker issues than

in the previous analysis.

One more blocker issue for player.c in this version

You can click the link in the table to directly view the new blocker violation on the Findings tab. In this case, the rule NOFALLTHROUGH was broken:

A new blocker issue for player.c in this version

Another convenient way to try and find why a project's quality is deteriorating is to filter on the trend of an artefact.

Select the current version of Earth again and edit the active filter: Uncheck the boxes for levels F and lower, and select

the Deteriorated category in the Evolution column.

When applying the filter, you should see the artefacts in the tree

that have the  icon

next to their name.

icon

next to their name.

The artefacts that deteriorated in the current version of Earth

The functions you looked at earlier are still here, but there are more deteriorated artefacts

that you can start reviewing. If you click on

hi_scores_disp(int) for example, which is rated E.

In order to find out where the degradation took place, you can look at the indicators tree to understand where the decline in quality comes from. Expand all the nodes in the indicators tree to reveal the issues with the artefact:

The Indicator Tree for hi_scores_disp(int)

Squore makes it easy to spot the irregularities quickly, like the fact that the Maintainability Non Conformity indicator is one of the causes for the worse score in this version. This is probably the first item to review in this function.

Take a look at the Coding Rules Compliance section of the artefact's scorecard to confirm the results:

The scorecard for hi_scores_disp(int)

By clicking the link icon, you can directly view the violations for this artefact in the Findings tab.

You can dive further into the analysis results by looking at the information contained in other tabs and assign action items to your team by referring to Chapter 6, Managing Your To-Do List With Squore or report your progress as explained in Chapter 9, Communicating With Squore.

In the previous section (the section called “Finding Artefacts Using Filters and Search”), you got familiar with searching and filtering to find the artefact that have a negative impact on the overall rating of a project. in this section, you will learn to master the Highlights functionality, which aims to make the process of finding certain categories of artefacts easier.

Highlights are flat lists of artefacts ordered in predefined categories for a model.

Let's try to confirm our findings about the worst and most deteriorated artefacts in Earth. Click on the current version of Earth and clear the filter. Click the Highlights tab of the Explorer and select the Top 10 worst artefacts category. The list appears as shown below:

The Top 10 worst artefacts in the current version of Earth

The list confirms that the artefacts with the worst rating are player.c and robot.c. The Highlights table shows you the artefact rating, name and path, and allows you to go to the artefact's dashboard directly by clicking the artefact name.

Now you can also find the deteriorated artefact hi_scores_disp(int) that you identified with a filter earlier in the section called “Finding Artefacts Using Filters and Search”: select the Top 10 most deteriorated artefacts highlight category to see the artefact appear in the list of deteriorated artefacts in this version.

The Top 10 most deteriorated artefacts in the current version of Earth

Artefacts are sorted by degradation, i.e. the difference between the value of the main indicator in the previous baseline version compared to the current value. By clicking the Export button, you can export the selected items to a CSV file. If the Export button is greyed out, your licence does not include the option to export data to CSV files.

By default, the list of most deteriorated artefacts is compiled based on the previous version. By using the Reference list

in the Explorer Settings menu ( )and choosing another reference version, you can update the statistics based on the

new reference version you just selected.

)and choosing another reference version, you can update the statistics based on the

new reference version you just selected.

Note: The notions of baseline and draft versions is explained in the section called “Working with Draft and Baseline Versions”.

Squore administrators can customise and expand this list by referring to the Squore Configuration Guide.

For some projects, you may want to collect artefacts so you can review them later. Squore enables you to build a Review Set, a list containing artefacts that you want to keep track of. Let's log in as the demo user to review all the new artefacts added to a project, in order to evaluate their level of quality.

Isolating the new artefacts can be done in three steps:

Log in using the demo user (demo/demo).

Click on version V6 of Earth in the Project Portfolios to display the dashboard for the last version of the project.

Click the Filter icon to display only items in the Evolution column with the status New and apply your changes

You should see the following artefacts in the Artefact Tree:

The new artefacts in the current version of Earth

Squore makes it easy for you to keep track of these artefacts. Click on the icon above the Artefact Tree and select Add Filtered Results to Review Set.

You can now clear your filter, the artefacts you want to review are stored in your Review Set. Click the Review Set tab in the left pane of the explorer to find the items you just saved.

The Review Set filled with new artefacts for the new version of Earth

At any moment, the artefact currently selected in the Artefact Tree can be sent to the Review Set as well. Simply display the context menu for an artefact and click Add to Review Set to add it to the Review Set. Clicking an item in the Review Set pane has the same effect as clicking it in the Artefact Tree: the dashboard refreshes to show the information for that artefact. You can use the left and right arrows in the Review Set pane to go to the previous and next artefact in the list.

If you want to know more about what actions you can take after reviewing artefacts, refer to Chapter 6, Managing Your To-Do List With Squore and Chapter 9, Communicating With Squore.

Squore provides tools to understand, verify and enhance your model under the Models menu.

The Viewer, a graphical representation of all the analysis models on Squore Server

The Validator, a debug tool for model writers

The Dashboard Editor, which allows customising the dashboards that all users will see

The Analysis Model Editor, which allows modifying the model's ruleset

Users whose profile grants the "View Models" permission have access to the first two tools.

Users whose profile grants the "Modify Models" permission have access to the last two tools.

To use the Viewer:

Upon selecting the parameters above, the page is refreshed with the top-level indicators in the model, and you can click each indicator to unveil sub-indicators and their characteristics. You can drag the tree left and right to reveal all sub-levels if necessary. For each indicator selected, Squore displays the following information:

Target is the target artefact type for the selected item

Type is the type of the selected item

Mnemonic is the short code for the selected item

description is the description of the selected item

Data Provider is the Data Provider responsible for computing the selected item

MeasureId is the measure ID of the selected item

Computation is the formula used to compute the value of the selected item

ScaleId is ID of the scale associated with the selected item

Levels is the list of levels available for the selected item and their ranges

Note: The information available depends on the type of item selected.

If your work involves adjusting the model's metrics or dashboard, you can use the Validator to verify its integrity during as you make changes. Click Models > Validator to display the diagnostics organised by category, as shown below:

The software_analytics model in the Validator

The Summary tab displays a summary of all the diagnostics run for each category. By clicking any of the other tabs, more details are shown about potential problems found in your model. You can also show the complete XML of the model to understand the errors reported. The XML can be searched by using the Ctrl+F key combination to bring up the search dialogue, and then Ctrl+G to search for the next occurrence of the search term:

The Validator reporting errors

Your Squore administrator can help you get more information model development. You can also refer to the Squore Configuration Guide for a complete reference.

The Dashboard Editor is a graphical editor for the dashboards of a particular model. Dashboards consist of a key performance indicator, a list of tables and one or more columns of predefined charts. With the Dashboard Editor, you can rearrange the information shown on the dashboard for all users, or create a completely new dashboard for a new role in your project.

In order to use the Dashboard Editor:

Click Models > Dashboard Editor

Select a model and load an existing dashboard

The current dashboard skeleton is loaded in the editor, as shown below:

The Software Analytics model in the Dashboard Editor

The tree contains the list of pre-configured graphical elements that you can add to your current dashboard. When you hover over a dashboard element, a tooltip explains what metrics it displays. You can drag and drop an element over an existing chart on the current dashboard, drag charts and tables to rearrange them. When you are satisfied with your changes, you can save your modifications. You can also create a new dashboard, using an existing one as a basis for the new one, or start from a blank canvas.

In the tree, graphical elements are colour-coded so that you know before you add them to your dashboard if they are compatible with your model. Blue elements have minor incompatibilities with your current model and orange elements are likely not compatible. Use the tooltip for an element to understand why the element is flagged as incompatible.

More detailed explanations about the Dashboard Editor can be found in the Squore online help.

The Analysis Model Editor is a graphical ruleset editor where you can turn rules on and off, or adjust the categories associated to each rule in your model.

It also allows you to save ruleset templates so that you can use a different set of rules for each project you create

In order to use the Analysis Model Editor:

Click Models > Analysis Model Editor

Select a model to load its ruleset

The entire ruleset for the current model is displayed in table form, as shown below:

The Analysis Model Editor displaying the ruleset of the Software Analytics model

Use the filter pane and the table headers to find the rule you want to modify. You can activate or deactivate a rule by clicking the on/off switch in the table. If you want to make more modifications, cick the Edit icon for this rule.

You can edit multiple rules at once by checking several rules and using the actions list at the bottom of the page. When you save your changes, the configuration is reloaded and every new analysis for this model will use the new settings.

Changes made in the web interface are saved in the configuration folder on the server in a file called editor.xml.

Using the Analysis Model Editor, you can set up various ruleset templates to modify or ignore rules that do not apply for certain departments or project teams within your organisation.

Users with model edition privileges (see the Modify Models permission in the section called “User Profiles”) can define templates right from the Analysis Model Editor. Project managers can decide to modify existing templates or create new ones from the project wizard. In order to ensure that projects are analysed using company-wide standards, templates can be marked as approved, which prevents them from being modified by project managers.

In order to create a ruleset template:

Click on Models > Analysis Model Editor

Select an analysis model and locate the Template selection list above the filter tools. For a model where no templates exist yet, only the Duplicate As button is available so can can create a new template from the default one.

The ruleset template tools for a model with no custom templates yet

Click Duplicate As to create a new template and enter edit mode. In this example, we are creating a ruleset that contains only rules that apply to the Python programming language. By checking the Approved box, we are defining this ruleset template as read-only for project managers.

Creating a new python-rules ruleset template

Activate, deactivate or modify any rule you want for the template. In this example, we use the filter tools to select all Data Providers, turn off all the rules, and then select the Python-related Data Providers to activate all their rules

The python ruleset template includes the Pylint, pep8 and Squan Sources rulesets

When you are satisfied with your rule selection, click Save to save the template. It now appears in the template selection list. You can still modify it as needed, or click Duplicate As to start creating a new template based on your first template.

The saved python ruleset

Project managers can start using your template immediately by selecting it in the Ruleset Edition page of the project wizard, which is displayed after the Data Provider selection screen:

The template selection in the project wizard

Templates can also be applied to projects from the command line using the --rulesetTemplate parameter:

--rulesetTemplate="python-rules"

Project Managers may be interested in monitoring several projects as a whole. Squore provides a special dashboard view which compounds information about several projects into an analysis model dashboard, which can help you prioritise projects according to their current status.

In order to view the analysis model dashboard:

Log into Squore with the demo user.

Click the model name "Software Analytics" in the Project Portfolios.

The dashboard refreshes to show the compounded information for all projects analysed with this model using Quadrant charts and a summary table of the main indicators, the rating and the trend of each projects. and tables:

The analysis model dashboard for Software Analytics projects

In the quadrants, each project is represented as a bubble. Two indicators define the horizontal and vertical position of the bubble along the axes, while a third indicator defines the bubble size. Let's see how you should prioritise maintanance work on the your project portfolio for the sample projects. Click on the Complexity Volume Vs Cloning quadrant to view the full version:

In this chart, projects with a high code cloning ratio appear higher, while more complex projects appear more to the right. The size of each bubble indicates the size of the project in terms of source lines of code. Therefore, it may be easier to improve the quality of a project with a more cloning but less complexity like Pluto (dark green) than a project with less cloning but more complexity (Mars, in red) As a project manager, you know that as a general rule you need to focus on moving projects towards the bottom-left corner of the chart for a healthy portfolio of projects.

Below the quadrants, Squore displays tables with the values used in the charts so you can refine the information read in the charts. All the information shown in the analysis model dashboard can be configured by a Squore administrator. Refer to the Squore Configuration Guide for more information.

Table of Contents

The analysis results you obtained by creating your first projects in Chapter 4, Creating Projects and Versions and observed in Chapter 5, Understanding Analysis Results can be drilled down further by looking at the other tabs available in the Explorer. In this chapter, you will learn how to use the information contained in Indicators, Measures, Findings and Action Items to better understand and reuse the information provided by Squore in your development workflow.

If you need more background information about the measures and indicators used in the charts and tables in the dashboard, the Indicators, Measures and Findings tabs can provide more details about the statistics recorded for the current artefact. Note that these tabs are not displayed by default. If you want to show them in Squore, click the Explorer Settings menu and then Manage Tabs to display the Tab Manager to enable these tabs, as shown below:

The Manage Tabs option in the Explorer Settings allows to display the Tab Manager

The Tab Manager allows to display tabs hidden by default by checking them. Note that not according to your configuration, some tabs may not be removeable

If you want to understand the scale used for a particular indicator, to see for example how close you are to moving up the rating scale, you can check the scale used for this indicator in the Indicators tab of the dashboard.

Log in and search for the artefact DB5_backup.c in the Neptune project, where the indicator Maintainability Non-Conformity is rated E. While this tells you about the current rating for this artefact, this does not tell you how to improve it. In order to learn how to improve this score, let's first take a look at the scale used for this indicator. Click the Indicators tab of the Explorer. The table of indicators opens, as shown below:

The indicators table for DB5_backup.c

The table lists all the indicators available for the artefact over several pages. The scale and levels available for an indicator can be viewed in a tooltip by placing your mouse over a rating. Using the filter above the "name" column, look for the entry named Maintainability Non-Conformity, then click its value in the rating column. The scale for the indicator indicates that the artefact is rated E because the value of the indicator is 472.09. In order to improve the score, the value would need to decrease to under 250 to be rated D, as shown below:

The scale used for rating Maintainability Non-Conformity

To understand how to improve the rating, you need to know how the indicator's value is computed. Clicking the indicator name in the Indicator Tree shows the following explanation in the indicator popup:

The indicator popup for the Maintainability Non-Conformity indicator

The computation, i.e. the formula used to calculate the rating is

1000*(WEIGHTED_NC_MAI/ELOC), meaning that the indicator

computes a ratio of broken Maintainability rules.

To find out what these rules are, click the

Findings tab.

Squore displays all the findings for a particular artefact in a table in the Findings tab. Next to the finding's label is a number of occurrences followed by a colour-coded delta value (red for more occurrences, green for less) compared to a previous analysis.

If you want to find out which rules

are taken into account by the Maintainability Non-Conformity indicator, click the

>> button next to the default filter to show the advanced filtering options. Highlight Maintainability

in the ISO Characteristics filter to see the corresponding rules, as shown in the picture below:

The findings table for DB5_backup.c

You can filter violations according to many criteria, including relaxation status, origin, artefact type and other characteristics from the analysis model

The rules BWGOTO, STDIO, NOGOTO, RETURN and COMPOUNDIF are the rules that should be fixed in order to improve the Maintainability rating of DB5_backup.c.

You can expand the BWGOTO rule to show each occurrence of the rule being broken, and also review the location in the source code that breaks the rule, as shown below:

The location of the broken occurrences of the BWGOTO rule

The list of findings indicates if a finding is New, Closed or Modified since the reference version. Findings are traceable through time, so even if your code is modified, you can to go back to the version in which it was first detected.

Finally, clicking on the line number for each rule breaking occurrence opens the source code viewer in full screen so you can carry out your code review:

The source code viewer allows comparing the code against another version of the code. Select a version name in the Compare to: list to switch to diff mode, as shown below:

The source code viewer in diff mode

In diff mode, use the top arrows to switch the left and right panes, and the bottom arrows to turn synchronised scrolling on or off. Characters that were removed are underlined in green, while characters that were added are underlined in red.

Analysing findings helps improving the quality of the code in your project. There is much more you can do with the Findings tab by using the built-in filters to detect regressions and improvements:

Violations: displays all the rules violated in this version

Lost Practices: displays violations that are new in this version since a specified version

Acquired Practices: displays all violations not occurring anymore in this version since a previous version

Deteriorated Practices: displays all violations with more occurrences in this version than in a previous version

Improved Practices: displays all violations with less occurrences in this version than in a previous version

New violations: displays all the new violations since a previous version

Fixed violations: displays all the violations fixed since a previous version

All changed violations: displays all the rules where a change in the number of violations was detected, essentially providing the combination of New violations and Fixed violations in one list

All rules: displays all the rules checked by the model, i.e. the violated ones as well as the ones that are not

By default, the Findings tab displays violations compared to the previous analysis, but you can refine the search by adjusting the Reference drop-down list (under the Explorer Settings menu) that contains all the versions analysed in your project.

You can learn about more automated ways to review and fix code in the section called “How Do I Review And Manage Action Items Flagged by Squore?”.

You can click the Export button at the bottom of the list of findings, to generate a CSV file of the findings displayed in the user interface. The contents of the file reflect your current filter selections on the page. The following is a CSV export for the Findings of the Earth project, which you can download in full here.

A CSV export of the findings of the Earth project

If the Export button is greyed out, your licence does not include the option to export data to CSV files.

If you realise that a violation found during an analysis is not justified, you can relax it from the Findings tab of the Explorer.

In the example below, a we consider that a Backward goto violation should not be reported, because it is a false positive. Let's start by locating the violation in the Findings tab:

The backward goto violation we want to relax

When you hover over the menu icon for the violation, you can display a context menu that allows you to change the status of the finding:

The finding context menu

Click Change Status... to view the available statuses for the violation.

The Change Status Popup

Type a justification or comment for the relaxation and choose from one of the reasons for relaxing the violation:

Normal is the default status for new findings, which means no relaxation

Derogation means that you are relaxing a true violation for an exceptional reason

False positive can be used to work around a violation that was falsely detected by a data provider

Legacy system is used when a violation is detected in a piece of code that was analysed but cannot or will not be fixed.

In our example, select False Positive, enter a comment and click Change. The Findings page will reload and the violation will be gone from the list.

The updated findings list after relaxing the backwards goto

Relaxed findings are never deleted. If you want to review the list of findings that were relaxed in your project, adjust the filter on the Findings tab to display relaxed findings, as shown below;

The filtered list of findings for the project, including the backwards goto false positive

You can relax an individual finding, all findings for an artefact, or an entire rule at once. Note that instead of relaxing a rule.

Note that you can also relax artefacts from the Artefact Tree (see the section called “Relaxing Artefacts”) deactivate rules by using the Analysis Model Editor (see the section called “Analysis Model Editor”).

Squore provides a violation relaxation mechanism that is triggered via comments found in the source code itself. There are two pre-requisites for relaxation to work:

The model used to analyse your source code must implement a special rule called R_RELAX for relaxation to take place.

You need to know the mnemonic of the violated rule you want to relax, in order to use it as a key in your comment.

Squore interprets comments formatted in one of these three ways:

Inline Relaxation

This syntax is used to relax violations on the current line.

some code; /* %RELAX<keys> : Text to justify the relaxation */

Relax Next Line

This syntax is used to relax a violation on the first following line that is not a comment. In the example the text of the justification will be: "Text to justify the relaxation the text of the justification continues while lines are made of comments only"

/* >RELAX<keys> : Text to justify the relaxation */ /* the text of the justification continues while */ /* lines are made of comments only */ some code;

Block Relaxation

This syntax is used to relax violations in an entire block of code.

/* {{ RELAX<keys> : Text to justify the relaxation */

/* like for format 2 text can be on more than one line */

int my_func() {

/* contains many violations */

...

}

/* }} RELAX<keys> */<keys> can be one of the following:

<*>: relax all violations

<MNEMO>: relax violations of the rule MNEMO

<MNEMO1,MNEMO2,...,MNEMOn>: relax violations of rules MNEMO1 and MNEMO2 ... and MNEMOn

The relaxed violations are still shown in the Findings page after the next analysis, but they appear under the rule R_RELAX, showing the mnemonic of the relaxed violation and the justification text.

As an example, this is how you would relax the violations of the rule Backward goto for Maintainability Non-Conformity in Neptune:

click the violation of Backward goto on the Findings page to find the rule's mnemonic (BWGOTO) and the location of the finding (DB5_backup.c line 52).

The details of the Backward goto violation

Edit the code of the sample project to relax the violation as shown below.

goto loopwrite; /* %RELAX<BWGOTO> : This backward goto is acceptable in our code. */

Create a new version of the project.

On the Findings page, the violation now visible if you select to display derogations in the filter:

The relaxed violation is visible when displaying derogations

In this section, you will learn how to relax artefacts directly from the Artefact Tree instead of relaxing violations by editing the source code of the application. Relaxing artefacts ensures that their metrics do not impact the rating of the project, however, data providers will still generate findings for the relaxed artefacts.

This example uses the Mars project from the samples folder. Ensure that you are a Project Manager in this project, or are part of a role with the View Drafts of Projects and Modify Artefacts privileges before you begin.

Expand the Project Portfolios to show all the versions of Mars. There are two versions in the tree (from bottom to top):

For more information about the concepts behind baseline and draft versions, refer to the section called “Working with Draft and Baseline Versions”.

Click on Mars > Current to see the artefacts in the Mars project as created by the demo script:

The artefacts in the Current version of the Mars project and their rating

To relax an artefact and therefore tell Squore that its rating should not impact the rest of the project, display the context menu for this artefact. The relaxation options appear at the bottom of the menu if they are available for your model, as shown below:

The artefact context menu

There are two actions that can be taken to relax an artefact:

Relax... allows simply marking an artefact as relaxed, leaves it in the tree in a way that will not impact the overall rating of the project.

Exclude... also relaxes the artefact but then removes it from the Artefact Tree so it will not be visible anymore in future analyses.

In both cases, the relaxation action is only made on a draft version and can be reversed by selecting the Un-relax... entry in the menu or the Clear unapplied changes option in the project portfolio.

Clicking Relax... or Exclude... brings up a pop-up menu where you can type

a comment to explain the reason for the relaxation. Let's relax mars_common.c so it stops impacting the

overall project rating. Click the Relax... option in the menu to display the relaxation

popup and enter a relaxation comment:

The relaxation justification

Click Confirm to save your comment, and notice how the Artefact Tree is updated to reflect the finding's status:

The relaxed mars_common.c in the Artefact Tree

Other users can review the justification for the relaxation by clicking on the Log... item in the artefact context menu:

The log of changes for the artefact mars_common.c

If you keep relaxing artefacts in this project and create a new draft build of the project, then you will end up seeing changes in the overall rating,

When you relax an artefact, the action items and findings relevant to this artefact are hidden, except when you specifically click on the relaxed artefact. If you want to show them, you can do so by clicking the Include Relaxed Artefacts option from the Explorer Settings menu.

You can show or hide relaxed and excluded artefacts by checking the boxes with the appropriate status in the filter popup:

The artefact statuses shown by default in the Artefact Tree

If you notice that a violation in the code or an issue in the project was not detected during an analysis, you can decide to create a finding manually from the Artefact Tree.

This feature, like the creation of manual artefacts (see the section called “Adding and Removing Artefacts Manually”) is only available if your model was configured to support it. Consult your Squore administrator to verify if it is available in your configuration.

In this example, we add a finding to notify of a documentation issue in the Neptune project. Click on the Current version of the project, and display the context menu for the artefact where you consider that the documentation is wrong.

When you click the Add a finding... option, a dialog appears and lets you select the type of finding to add, as well as a description of the issue:

Click Add to save the finding. You can check that it was added successfully in the Findings tab of the Explorer:

Manual findings are displayed automatically in the Findings tab like other findings. If you want to filter them, use the advanced filter and select or exclude [Manual] in the Data Provider category.

Like regular findings, yout finding also displays in the source code viewer, as show below:

Squore lets you view and edit project attributes in a dedicated form tab of the explorer. You can therefore design your wizards to present checklists to a user. They can fill in the values manually after an analysis and they will be taken into account when creating the next version of the project. There are permissions associated with editing form values, so you can make them read-only for guests but read-write for project managers. The attributes displayed on the Forms tab depend on the type of the current artefact, and values are saved individually for each artefact in the project.

To begin working with forms, make sure you select the Current version of the project in the tree and that the Forms tab is visible in the Explorer.

When you click a project in the Project Portfolios and view the Forms tab of the Explorer, all the project attributes available at application level are displayed, as shown below:

The Forms tab for the Neptune project at application level

The values displayed correspond to the application attributes passed when the last version of Neptune was created. Users with the whose role grants them the Modify Artefacts Attributes privilege can edit the current value of the form for any artefact, and the value will be taken into account during the next analysis.

When you modify the values in the form, you can use the comment field to justify the change you made. A history of the modifications can be displayed by expanding the attribute field, as shown below

A history of modifications for the Test Coverage attributes

If you have doubts about the measures computed by Squore and their meaning, they can usually be solved by looking at the Measures tab of the Explorer. The content of the measures tab is also always refreshed to reflect the data for the current artefact, and is organised in a table displaying the measure's mnemonic, full name, description and value for the current selection, as shown in the picture below.

The table of measures for the DB5_backup.c

Measures can be sorted by mnemonic, name, description or value, and the sorting value is remembered when selecting another artefact in the tree so you can easily compare values.

The table also tells you which Data Provider reported the metric and its status in the latest analysis, so you can determine if a metric was computed or has its default value from the analysis model. The possible status values are: